Is Programming Math and Engineering?

Yes! Of course it is. Where the author fails is in narrowly defining math and engineering as traditionally male-dominated professions. Historically and across cultures, women have dominated domestic crafts. Weaving, knitting, embroidery, tailoring, basket making and pottery are all forms of “thing thinking” that require high-level problem solving, mathematics and a deep understanding of materials. Yet we do not value them at the same level as men’s work. This is partially due to the more impermanent and replaceable nature of these crafts, but it’s also because we do not value women at the same level we value men. So we call the things men do “engineering” and the work women do “crafts”. The fact that men’s traditional crafts involve stone and metal, and women’s involve clay and yarn, is irrelevant to the skills needed or women’s interest in them. Just ask Hobby Lobby.

Weaving involves configuring complex repeating patterns of information into a machine that renders that information into new forms. (Sound familiar?)

Is Programming Human Language?

Yes! Of course it is. When we program we have several different audiences. The compiler or runtime is one. Other developers who must read and understand our program are another. Some of the worst code I’ve ever worked on was written by coders with poor communication skills. Naming things is hard; composing code into a readable flow is hard. By applying basic composition skills to our code we can help those who come after us.

Larry Wall, the creator of the massively influential Perl programming language, has spoken and written extensively on the connection between human linguistics and programming. Perl itself was informed by and designed with linguistic principles.

Is Programming Applied Sociology?

Yes! Of course it is. As all programmers who have been around a while will tell you, writing the right code is much harder than writing the code right. Programmers must be part of the process of gathering requirements and empathising with customers. We have to work hard to ask the right questions, give meaningful critiques, and understand the problems that need to be solved.

Is Programming STEM?

Maybe? My personal feeling is that part of the problem of getting women, and men who do not conform to stereotypical “Big Bang Theory” nerdom, into programming lies in the public perception and marketing of the career. We are working against a harmful and counterproductive vision of the coder as a socially awkward genius sitting in a dark room. Most programming isn’t writing router software or physics engines. Developing software for humans requires a high degree of general-purpose problem solving, teamwork and empathy. Many of the best programmers I’ve worked with did not come into programming through traditional educational paths, and I’m not convinced that grouping programming with math and engineering is beneficial to it from a marketing perspective. Perhaps it should be in business, design, or even on its own.

We are also working against a toxic and misogynistic culture that drives out the women who do want to engage. The most baffling thing about the manifesto is its choice to basically ignore the voices of women who will tell you why they left. It’s not a mystery we need brain scans to solve. Just ask.

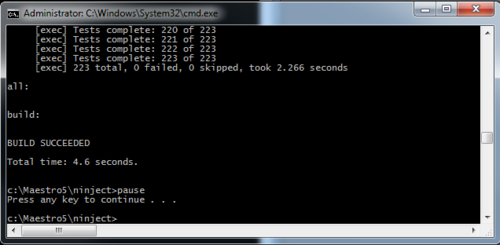

I did a very quick and dirty calculation on the savings in dev time and came up with $200,000 per year for our staff. That would be if every developer ran verify only once per workday (and we know it’s more).
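For anyone who wants to run the same kind of back-of-the-envelope estimate, here is a minimal sketch of the arithmetic. The function name and every input value are hypothetical placeholders chosen only for illustration; they are not the figures behind the number above.

```python
def annual_savings(developers, minutes_saved_per_run, runs_per_day,
                   workdays_per_year, hourly_cost):
    """Rough yearly dollar savings from shaving time off a build step."""
    # Total developer-hours saved across the whole team in a year.
    hours_saved = (developers * runs_per_day * workdays_per_year
                   * minutes_saved_per_run) / 60
    return hours_saved * hourly_cost


# Hypothetical inputs: 10 developers, 6 minutes saved per run,
# one run per workday, 250 workdays, $50 per developer-hour.
estimate = annual_savings(10, 6, 1, 250, 50.0)
print(f"${estimate:,.0f} per year")
```

Because runs per day is a plain multiplier, doubling it doubles the estimate, which is why one run per workday is the conservative case.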

That’s a powerful argument for hackathons or just letting your developers have time to make their projects better.

You see there are two rules about microservices. 1) They need to be isolated and 2) They need to be more isolated than that. In fact they need to be Kim Jong-un isolated. When you run a microservice as its own deployable behind its own REST interface then it’s easy. You can use whatever libraries, languages, even operating systems you want. However, when deploying a jar inside of another application you are suddenly no longer free. The runtime will demand only one version of your org’s favorite MVC framework, for example, and everyone had better be on the same page.

Several years ago some hipster programmers were frustrated by HTML. Since dogs and babies were qualified to write HTML, they weren't able to let everyone else know how awesome they were on 100% of a project. So they invented HAML to protect their smartypants status.

My first computer was a TI-99/4A. It had a 16-bit 3 MHz processor and came with 256 bytes of RAM and 16 KB of extended VRAM. That was more than enough for any of the programs I wrote at home. I made my own knockoffs of games like Centipede, Pong and Berzerk. Just let that sink in for a moment. Centipede, written in BASIC, in under 16 KB of RAM. When Bill Gates supposedly said that nobody would ever need more than 640 KB of RAM, we all believed him because that was an INSANE amount of memory. At the time I couldn’t even fathom what I would possibly use that much memory for. You could only run one program at a time anyway.

I’m a computer programmer. Not exactly the kind of job that encourages a healthy lifestyle. So I came up with a few rules:

Frameworks are pretty, they solve all of your problems and their perfect 19-year-old bodies sparkle while they seduce you with their smoldering eyes. Don’t be fooled though! Any framework that forces you to generate boilerplate after boilerplate that you don’t find useful (or understand) is pure eeeevil. Even worse are the ones that generate these boilerplate classes themselves and inject their unholy poison all over your app. They suck away your flexibility, your ability to test and your ability to be lightweight. They are often quite good at doing something the way they think you should do it, but as soon as you need to do something different (about day 3 in) they make your life a living hell.
